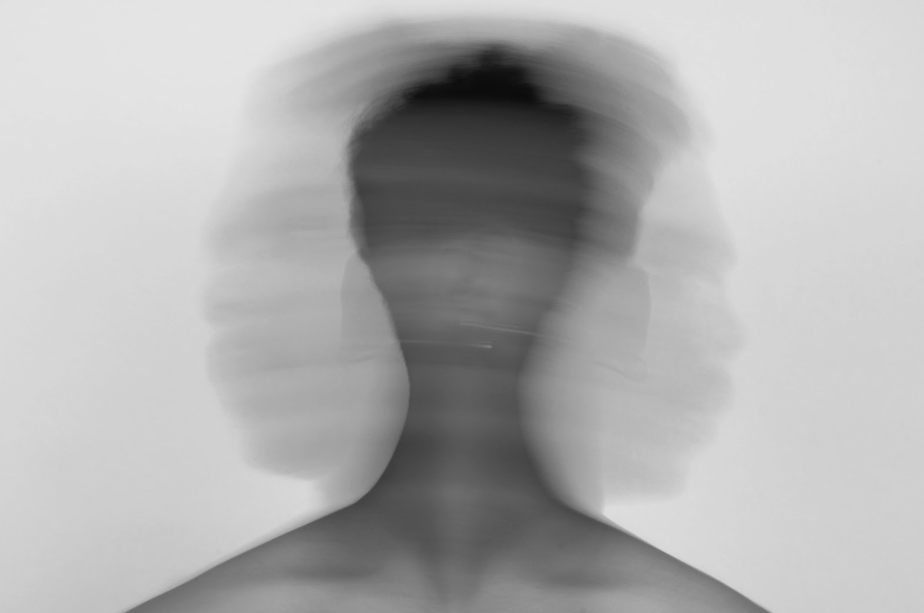

Imagine a hypothetical war in which videos emerged of one side's leader calling for the widespread surrender of their own soldiers. Imagine this clip spread like wildfire across social media, creating mass panic, outrage, and confusion among that side's people. No, this is not the plot of some abstract science fiction novel; in fact, this is reality. In March 2022, a convincing deepfake video of Ukrainian President Volodymyr Zelenskyy surfaced, ordering troops to stand down in the face of a Russian invasion. Only after millions watched, liked, and shared this clip did fact-checkers debunk it. By that time, Russian propaganda had already succeeded. If a fake video can shake a country in the middle of war, it’s not hard to imagine how Artificial Intelligence (AI) could fracture the foundations of truth at home. Deepfakes are real enough to mislead millions, and that should make all of us uneasy. AI is eroding the foundation of civic life: a shared sense of reality.

Democracy relies on disagreement. As James Madison points out in Federalist No. 10, the chafing of factions against each other is what, ultimately, promotes the public good. However, disagreement is only productive if citizens share a baseline understanding of facts. We can argue about policy and culture, but only if we agree broadly on what actually happened. Deepfakes destroy that baseline. Video evidence, once considered the most concrete of proofs, now carries an asterisk: maybe it is fake. Once we start doubting everything, debate collapses into stalemate, trust in institutions weakens, and the public retreats into echo chambers where confirmation replaces verification.

Yet the real threat is not that people will believe fake information; it is that they will stop caring whether anything is true. Political deepfakes like the Zelenskyy clip show that certainty can never be absolute. Audiences from now on will ask: How do I know this is not AI-generated? A recent study by Mimecast found American trust in online content is down 78% in the last six months. Skepticism has spread faster than fact-checking can keep up. It has become the default.

This problem is not confined to foreign politics. Domestic news, protest footage, war reporting, and even investigative journalism all rely on a shared sense of reality. In September 2025, a video circulated online that appeared to show items being thrown from a White House window, only for President Trump to dismiss the footage as “probably AI-generated.” As AI evolves, deepfakes will become the most convincing of scapegoats, and institutions will continue to lose authority. Truth will become completely tribal: believe what reinforces your pre-existing ideas and dismiss what challenges them. This kind of epistemic tribalism accelerates polarization, making compromise nearly impossible because each side believes the other is not just wrong but fundamentally delusional.

Some argue that society will adapt to AI. Verification tools and media literacy programs could blunt the worst effects. But technology alone cannot restore a society's trust once it is broken. This trust is a matter of cultural norms and habits, and once these habits erode, restoring them takes decades. Consider the deep mistrust among Americans who came of age during the Watergate era. This distrust has cascaded down family lines, leading to a low level of trust across all generations. In the meantime, collective action will stall, leaving people unable to act together and contributing to the polarization that is already so prevalent in society.

If deepfakes teach us anything, it is that truth is fragile and requires defense. Institutions will be forced to adapt by committing to transparency and verification. Individuals will be forced to adapt by resisting reflexive outrage and demanding evidence before forming conclusions. A society that cannot agree on what is real simply cannot agree on what to do.

